SecureCloud Hub. Real implementation details behind the Azure-native zero-trust file sharing platform.

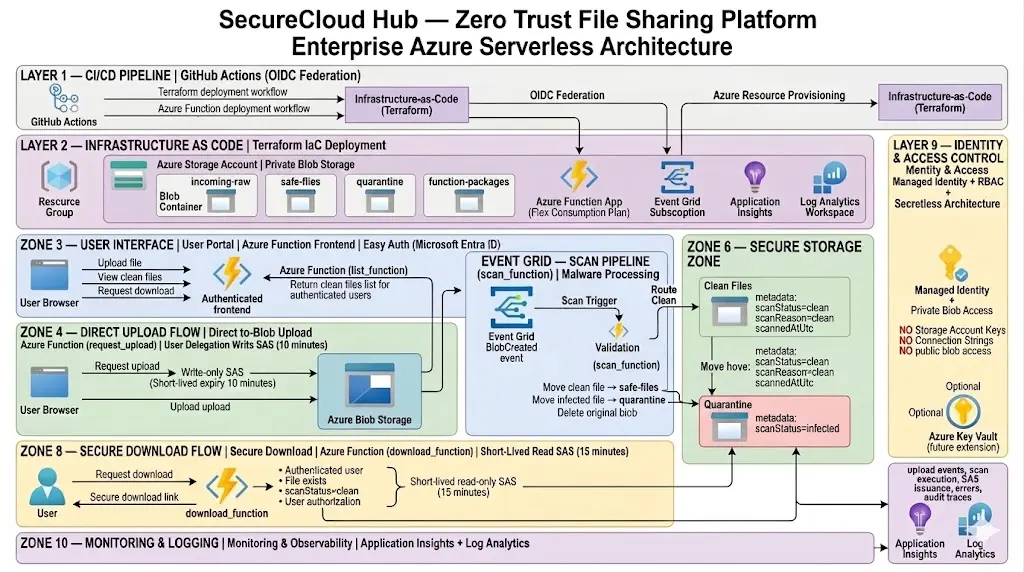

This page documents the real implementation behind SecureCloud Hub: direct-to-Blob upload SAS, private storage containers, Event Grid-triggered malware scanning, user-scoped secure downloads, Terraform-managed Azure infrastructure, and observability through Application Insights, Function logs, and storage audit telemetry.

Browser → request_upload_function → incoming-raw → Event Grid → scan_function → safe-files / quarantine → download_function → read-only SAS → client download

Production-style Azure serverless workflow.

The architecture spans OIDC-based deployments, Terraform-managed infrastructure, authenticated uploads, Event Grid malware scanning, secure file isolation, and short-lived SAS-based downloads.

End-to-end secure file workflow

A high-level view of the platform flow across deployment, identity, storage, malware validation, secure downloads, and monitoring.

- GitHub Actions authenticates to Azure using OIDC for passwordless Terraform and Function deployments.

- Authenticated users upload directly to private Blob Storage using short-lived write SAS tokens.

- Event Grid triggers the malware scanning pipeline and routes files to clean or quarantine storage.

- download_function validates identity, file ownership, and clean scan metadata before issuing read-only SAS access.
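The direct-upload step in the list above can be sketched from the client side. This is a minimal illustration, not the project's frontend code: it assumes request_upload_function has already returned a write SAS URL (the URL below is a hypothetical placeholder), and it only builds the request without sending it. The `x-ms-blob-type: BlockBlob` header is what the Put Blob REST operation expects for a single-request block blob upload.

```python
import urllib.request


def build_put_blob_request(
    sas_url: str,
    data: bytes,
    content_type: str = "application/octet-stream",
) -> urllib.request.Request:
    """Build (but do not send) a Put Blob request against a write SAS URL."""
    return urllib.request.Request(
        url=sas_url,
        data=data,
        method="PUT",
        headers={
            # Required by the Put Blob operation when uploading a block blob.
            "x-ms-blob-type": "BlockBlob",
            "Content-Type": content_type,
        },
    )


# Hypothetical SAS URL; a real one would be issued by request_upload_function.
example_url = (
    "https://examplestorage.blob.core.windows.net/"
    "incoming-raw/alice@example.com/report.pdf?sv=PLACEHOLDER&sig=PLACEHOLDER"
)
request = build_put_blob_request(example_url, b"hello")
# Sending it would be: urllib.request.urlopen(request)
```

Because the SAS token travels in the query string, the client needs no storage credentials of its own; the CORS rule on the storage account is what lets a browser make the same call.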

Explore the implementation

The sections below cover the Python download function, Terraform IaC, CI/CD, observability queries, and the malware pipeline as they work in the final implementation.

Python – download_function

The download function is the final access gate. It parses the authenticated Easy Auth principal, derives the real user namespace, verifies the file exists inside safe-files, checks that the blob metadata shows scanStatus=clean, and only then generates a 15-minute read-only user delegation SAS URL.
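For local testing of the identity step, it can help to build a synthetic `X-MS-CLIENT-PRINCIPAL` value. The sketch below is an assumption-laden illustration: `make_principal_header` and `user_namespace` are hypothetical helper names, and the payload contains only the fields the download function reads (a `claims` list of `typ`/`val` pairs), not everything App Service sends.

```python
import base64
import json

EMAIL_CLAIM = "http://schemas.xmlsoap.org/ws/2005/05/identity/claims/emailaddress"


def make_principal_header(email: str, name: str = "Test User") -> str:
    """Encode a synthetic X-MS-CLIENT-PRINCIPAL value for local tests."""
    principal = {
        "auth_typ": "aad",
        "claims": [
            {"typ": EMAIL_CLAIM, "val": email},
            {"typ": "name", "val": name},
        ],
    }
    raw = json.dumps(principal).encode("utf-8")
    return base64.b64encode(raw).decode("ascii")


def user_namespace(header_value: str) -> str:
    """Decode the header and derive the lower-cased user namespace."""
    principal = json.loads(base64.b64decode(header_value).decode("utf-8"))
    claims = {c["typ"]: c["val"] for c in principal.get("claims", [])}
    return claims[EMAIL_CLAIM].lower()


header = make_principal_header("Alice@Example.com")
blob_name = f"{user_namespace(header)}/report.pdf"
print(blob_name)  # alice@example.com/report.pdf
```

Lower-casing the claim keeps the blob prefix stable even when the identity provider returns the address in mixed case. The full function follows.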

```python
import base64
import datetime
import json
import logging
import os

import azure.functions as func
from azure.core.exceptions import ResourceNotFoundError
from azure.identity import DefaultAzureCredential
from azure.storage.blob import BlobSasPermissions, BlobServiceClient, generate_blob_sas

logger = logging.getLogger(__name__)


def parse_easy_auth_principal(req: func.HttpRequest) -> dict:
    """Decode the Easy Auth principal header into a user ID and display name."""
    principal_header = req.headers.get("X-MS-CLIENT-PRINCIPAL")
    if not principal_header:
        return {}

    try:
        decoded = base64.b64decode(principal_header).decode("utf-8")
        principal = json.loads(decoded)
        claims = {c["typ"]: c["val"] for c in principal.get("claims", [])}
        return {
            # Prefer the email claim, fall back to preferred_username.
            "user_id": claims.get(
                "http://schemas.xmlsoap.org/ws/2005/05/identity/claims/emailaddress",
                claims.get("preferred_username", "unknown"),
            ).lower(),
            "name": claims.get("name", "unknown"),
        }
    except Exception as exc:
        logger.warning("Failed to parse Easy Auth principal: %s", exc)
        return {}


def is_valid_filename(filename: str) -> bool:
    """Reject empty names and anything that could escape the user namespace."""
    if not filename:
        return False
    if "/" in filename or "\\" in filename or ".." in filename:
        return False
    return True


def main(req: func.HttpRequest) -> func.HttpResponse:
    # 1. Identity: the caller must carry a valid Easy Auth principal.
    principal = parse_easy_auth_principal(req)
    user_id = principal.get("user_id", "unknown")
    if user_id == "unknown":
        logger.warning("Missing or invalid authenticated user.")
        return func.HttpResponse("Unauthorized.", status_code=401)

    # 2. Input validation.
    file_name = req.params.get("fileName")
    if not file_name:
        logger.warning("Missing fileName. user=%s", user_id)
        return func.HttpResponse("fileName query parameter is required.", status_code=400)
    if not is_valid_filename(file_name):
        logger.warning("Rejected invalid fileName. user=%s file=%s", user_id, file_name)
        return func.HttpResponse("Invalid file name.", status_code=400)

    storage_url = os.environ["STORAGE_ACCOUNT_URL"]
    safe_container = os.environ["SAFE_CONTAINER"]

    # 3. Ownership: the blob path is always prefixed with the caller's namespace.
    blob_name = f"{user_id}/{file_name}"

    credential = DefaultAzureCredential()
    blob_service = BlobServiceClient(account_url=storage_url, credential=credential)
    blob_client = blob_service.get_blob_client(container=safe_container, blob=blob_name)

    try:
        properties = blob_client.get_blob_properties()
    except ResourceNotFoundError:
        # Only a missing blob maps to 404; other failures surface as errors
        # instead of being masked as "not found".
        logger.warning("Blob not found. user=%s file=%s blob=%s", user_id, file_name, blob_name)
        return func.HttpResponse("File not found.", status_code=404)

    # 4. Scan status: only blobs marked clean are eligible for download.
    metadata = properties.metadata or {}
    scan_status = metadata.get("scanstatus", metadata.get("scanStatus", "unknown"))
    if scan_status != "clean":
        logger.warning(
            "Denied SAS issuance. user=%s file=%s blob=%s scan_status=%s",
            user_id,
            file_name,
            blob_name,
            scan_status,
        )
        return func.HttpResponse("File is not available for download.", status_code=403)

    # 5. Issue a 15-minute, read-only user delegation SAS.
    now = datetime.datetime.now(datetime.timezone.utc)
    expiry = now + datetime.timedelta(minutes=15)
    delegation_key = blob_service.get_user_delegation_key(
        key_start_time=now,
        key_expiry_time=expiry,
    )

    account_name = storage_url.split("//", 1)[1].split(".", 1)[0]
    sas_token = generate_blob_sas(
        account_name=account_name,
        container_name=safe_container,
        blob_name=blob_name,
        user_delegation_key=delegation_key,
        permission=BlobSasPermissions(read=True),
        expiry=expiry,
    )

    sas_url = f"{storage_url}/{safe_container}/{blob_name}?{sas_token}"

    # Assumption: the published excerpt ends at sas_url. Returning the URL as
    # JSON is one plausible response shape, not necessarily the project's own.
    return func.HttpResponse(
        json.dumps({"downloadUrl": sas_url}),
        mimetype="application/json",
        status_code=200,
    )
```

Terraform – storage account, containers, lifecycle, and CORS

The storage layer is fully managed by Terraform. The account is private, versioned, lifecycle-managed, and includes CORS to allow the authenticated frontend hosted on the Function App to upload directly to Blob Storage using short-lived SAS URLs.

```hcl
# ============================================================
# storage.tf
# Storage account + private containers + lifecycle rules
# ============================================================

resource "azurerm_storage_account" "main" {
  name                            = "st${var.project_name}${var.environment}001"
  resource_group_name             = azurerm_resource_group.main.name
  location                        = azurerm_resource_group.main.location
  account_tier                    = "Standard"
  account_replication_type        = "LRS"
  allow_nested_items_to_be_public = false
  min_tls_version                 = "TLS1_2"
  https_traffic_only_enabled      = true
  shared_access_key_enabled       = true

  blob_properties {
    versioning_enabled = true

    delete_retention_policy {
      days = 30
    }

    container_delete_retention_policy {
      days = 30
    }

    cors_rule {
      allowed_origins = [
        "https://${local.function_app_name}.azurewebsites.net"
      ]
      allowed_methods    = ["GET", "PUT", "OPTIONS"]
      allowed_headers    = ["*"]
      exposed_headers    = ["*"]
      max_age_in_seconds = 3600
    }
  }

  tags = local.common_tags
}

resource "azurerm_storage_container" "incoming_raw" {
  name                  = "incoming-raw"
  storage_account_id    = azurerm_storage_account.main.id
  container_access_type = "private"
}

resource "azurerm_storage_container" "safe_files" {
  name                  = "safe-files"
  storage_account_id    = azurerm_storage_account.main.id
  container_access_type = "private"
}

resource "azurerm_storage_container" "quarantine" {
  name                  = "quarantine"
  storage_account_id    = azurerm_storage_account.main.id
  container_access_type = "private"
}

resource "azurerm_storage_container" "function_packages" {
  name                  = "function-packages"
  storage_account_id    = azurerm_storage_account.main.id
  container_access_type = "private"
}
```

CI/CD – GitHub Actions with OIDC

The pipeline uses OpenID Connect to authenticate GitHub Actions to Azure without storing a long-lived client secret. One workflow applies Terraform and another publishes the Function App.
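The trust relationship can be pictured as a match on a few token claims. The sketch below is a simplified illustration under stated assumptions: the owner and repository names are hypothetical, and the real validation (including signature checks) is done by Microsoft Entra ID against a federated credential, not by code like this. The issuer URL, the `repo:OWNER/REPO:ref:refs/heads/BRANCH` subject format, and the `api://AzureADTokenExchange` audience are the values GitHub and Azure document for this flow.

```python
GITHUB_ISSUER = "https://token.actions.githubusercontent.com"
AZURE_AUDIENCE = "api://AzureADTokenExchange"


def branch_subject(owner: str, repo: str, branch: str) -> str:
    """Subject claim GitHub issues for a workflow run on a branch."""
    return f"repo:{owner}/{repo}:ref:refs/heads/{branch}"


def token_matches(claims: dict, expected_subject: str) -> bool:
    """Check issuer, subject, and audience against the trusted values."""
    aud = claims.get("aud")
    audiences = aud if isinstance(aud, list) else [aud]
    return (
        claims.get("iss") == GITHUB_ISSUER
        and claims.get("sub") == expected_subject
        and AZURE_AUDIENCE in audiences
    )


# Hypothetical repository; only runs on its main branch are trusted.
trusted = branch_subject("example-org", "securecloud-hub", "main")

main_run = {"iss": GITHUB_ISSUER, "sub": trusted, "aud": AZURE_AUDIENCE}
feature_run = {
    "iss": GITHUB_ISSUER,
    "sub": branch_subject("example-org", "securecloud-hub", "feature-x"),
    "aud": AZURE_AUDIENCE,
}

print(token_matches(main_run, trusted))     # True
print(token_matches(feature_run, trusted))  # False
```

Scoping the subject to one repository and branch is what keeps a workflow in a fork or on an unreviewed branch from obtaining Azure credentials.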

Infrastructure pipeline:

- Azure login via OIDC

- Terraform init / validate / plan / apply

- Remote state in Azure Blob Storage

Application pipeline:

- Azure login via OIDC

- Python setup

- Function App publish

- Runtime verification

Security outcome:

- No stored client secret in GitHub

- Azure trusts GitHub's OIDC token for the specific repo / branch

- Infrastructure and application deployments stay separated

KQL – scan lifecycle query

This query surfaces the full scan lifecycle from trigger to completion using the real trace messages emitted by scan_function.

```kusto
AppTraces
| where TimeGenerated > ago(24h)
| where Message has_any (
    "Scan triggered",
    "Scan completed",
    "Uploaded scanned blob successfully",
    "Deleted original blob from incoming container"
  )
| project TimeGenerated, Message, SeverityLevel
| order by TimeGenerated desc
```

KQL – function runtime query

This query shows Function App runtime messages across the environment, useful for confirming executions, errors, and host-level activity.

```kusto
FunctionAppLogs
| where TimeGenerated > ago(24h)
| project TimeGenerated, Level, HostInstanceId, Message
| order by TimeGenerated desc
```

KQL – storage audit query

This query captures the storage-layer side of the workflow: uploads, copies, and blob reads against the containers involved in the pipeline.

```kusto
StorageBlobLogs
| where TimeGenerated > ago(24h)
| where OperationName in ("PutBlob", "PutBlockList", "CopyBlob", "GetBlob")
| project TimeGenerated, OperationName, ObjectKey, CallerIpAddress, AuthenticationType, StatusCode
| order by TimeGenerated desc
```

Malware pipeline – clean path

This is the real clean-file outcome tested in the project.

incoming-raw/kql-test-clean.txt

→ scanStatus = clean

→ uploaded to safe-files

→ original blob deleted from incoming-raw

Malware pipeline – infected path

This is the real infected-file outcome tested using the EICAR test string.

incoming-raw/kql-test-infected.txt

→ scanStatus = infected

→ uploaded to quarantine

→ original blob deleted from incoming-raw

Malware pipeline – execution sequence

This sequence matches the actual processing steps recorded by the scan pipeline from blob creation through final container placement.

Blob uploaded

→ Event Grid triggered

→ scan_function executed

→ blob downloaded

→ scan completed

→ destination container selected

→ scanned blob uploaded

→ original blob deleted
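
The sequence above can be sketched end to end with in-memory stand-ins. Everything here is an assumption made for illustration: the dictionaries replace Blob Storage, `scan` is a toy check for the EICAR marker text rather than the project's scanner, and the function names are hypothetical. The subject format matches what Event Grid sends for BlobCreated events.

```python
EICAR_MARKER = b"EICAR-STANDARD-ANTIVIRUS-TEST-FILE"
SUBJECT_PREFIX = "/blobServices/default/containers/"


def parse_blob_subject(subject: str) -> tuple[str, str]:
    """Split an Event Grid BlobCreated subject into (container, blob name)."""
    if not subject.startswith(SUBJECT_PREFIX):
        raise ValueError(f"Unexpected subject: {subject}")
    container, sep, blob = subject[len(SUBJECT_PREFIX):].partition("/blobs/")
    if not sep or not blob:
        raise ValueError(f"Unexpected subject: {subject}")
    return container, blob


def scan(data: bytes) -> str:
    """Toy stand-in for a malware scanner: flags the EICAR marker only."""
    return "infected" if EICAR_MARKER in data else "clean"


def process_event(storage: dict, subject: str) -> str:
    """Scan one uploaded blob and move it to its destination container."""
    container, blob_name = parse_blob_subject(subject)
    data = storage[container][blob_name]                # blob downloaded
    status = scan(data)                                 # scan completed
    destination = "safe-files" if status == "clean" else "quarantine"
    storage[destination][blob_name] = {                 # scanned blob uploaded
        "data": data,
        "metadata": {"scanStatus": status},
    }
    del storage[container][blob_name]                   # original blob deleted
    return destination


storage = {"incoming-raw": {}, "safe-files": {}, "quarantine": {}}
storage["incoming-raw"]["kql-test-clean.txt"] = b"hello"
storage["incoming-raw"]["kql-test-infected.txt"] = b"prefix " + EICAR_MARKER

base = SUBJECT_PREFIX + "incoming-raw/blobs/"
print(process_event(storage, base + "kql-test-clean.txt"))     # safe-files
print(process_event(storage, base + "kql-test-infected.txt"))  # quarantine
```

Writing the destination blob before deleting the original means a failure midway leaves the file in incoming-raw, where it can be reprocessed, rather than losing it.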